A photo lands in your inbox. It looks completely real: a person, a scene, a moment frozen in time. But something feels slightly off. You can't name it. You just feel it. In 2026 that nagging doubt has a name, AI generated image suspicion, and it is more justified than ever. AI image generators like Midjourney, DALL·E, and Stable Diffusion have become so advanced that millions of synthetic photos circulate daily across news feeds, dating profiles, job applications, and social media. Knowing how to tell if an image is AI generated is no longer a niche skill. It's digital literacy.

- AI generated images often contain subtle but identifiable visual artifacts in hands, teeth, backgrounds, and lighting.

- Metadata inspection can offer clues about whether an image came from a camera or from an AI pipeline.

- Free tools like WeDetectAI can analyze an image and return a confidence score in seconds.

- No single method is 100% reliable; combining visual inspection with a detector tool gives the most accurate result.

- AI image detection accuracy has improved significantly, with leading models now exceeding 90% accuracy on benchmark datasets of synthetic and real images.

What Does “AI Generated Image” Actually Mean?

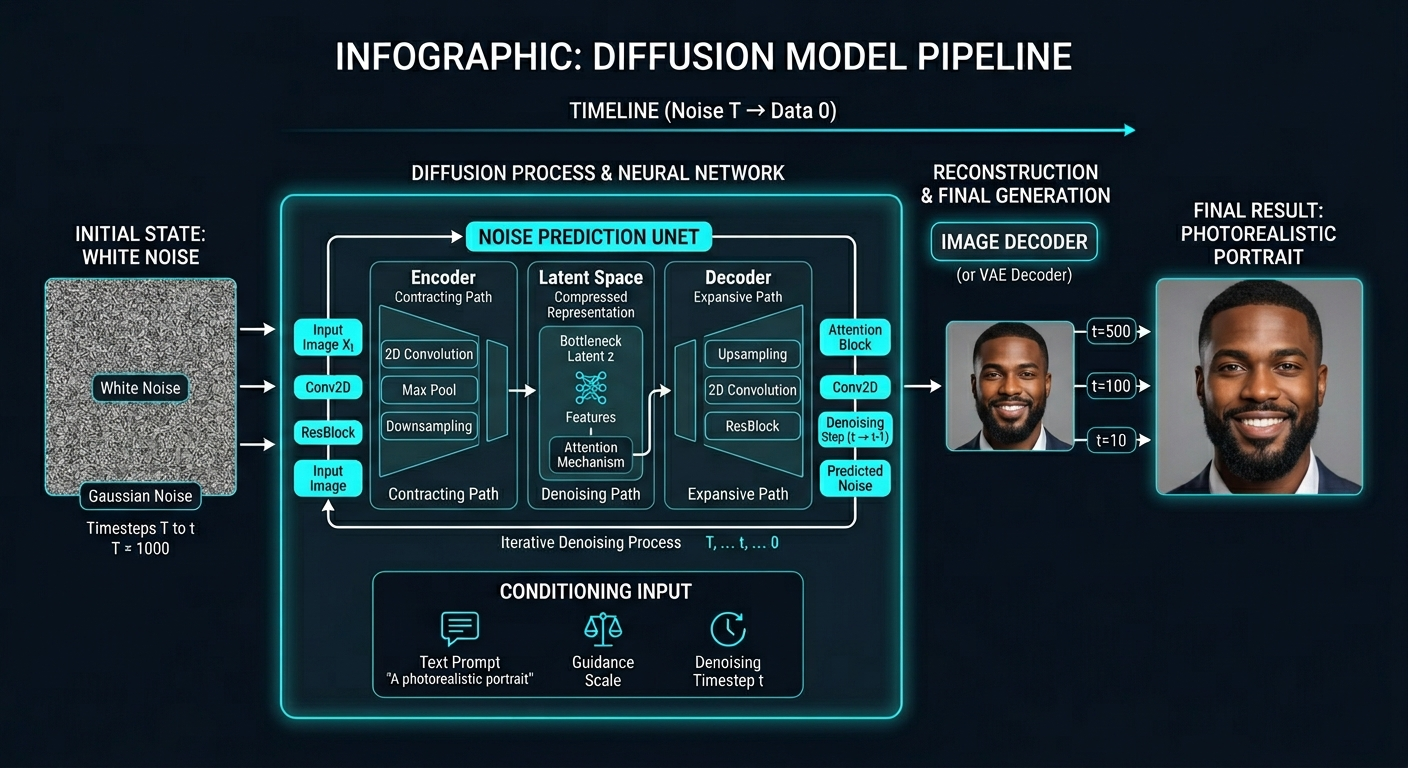

An AI generated image is any visual content produced entirely or substantially by an artificial intelligence model, rather than captured by a camera or drawn by a human. The most common systems behind these images are Generative Adversarial Networks (GANs), diffusion models (used by Midjourney, DALL·E 3, and Stable Diffusion), and variational autoencoders (VAEs). Each of these architectures learns statistical patterns from large collections of real images (billions of them, for the largest models) and then synthesizes entirely new visuals starting from random noise.

In practical terms, this means AI generated images have never existed in the physical world. No lens captured them. No shutter clicked. They are the output of a mathematical process, and that process, however sophisticated, leaves behind traces that trained eyes and detection algorithms can identify.

The scale of the problem matters. According to a 2024 report by the AI firm Clarity, over 90 billion AI images were generated in 2023 alone. That number has climbed sharply into 2026. A significant portion of those images end up online, misrepresenting events, fabricating identities, or manipulating public opinion.

Further reading: How AI image generators work

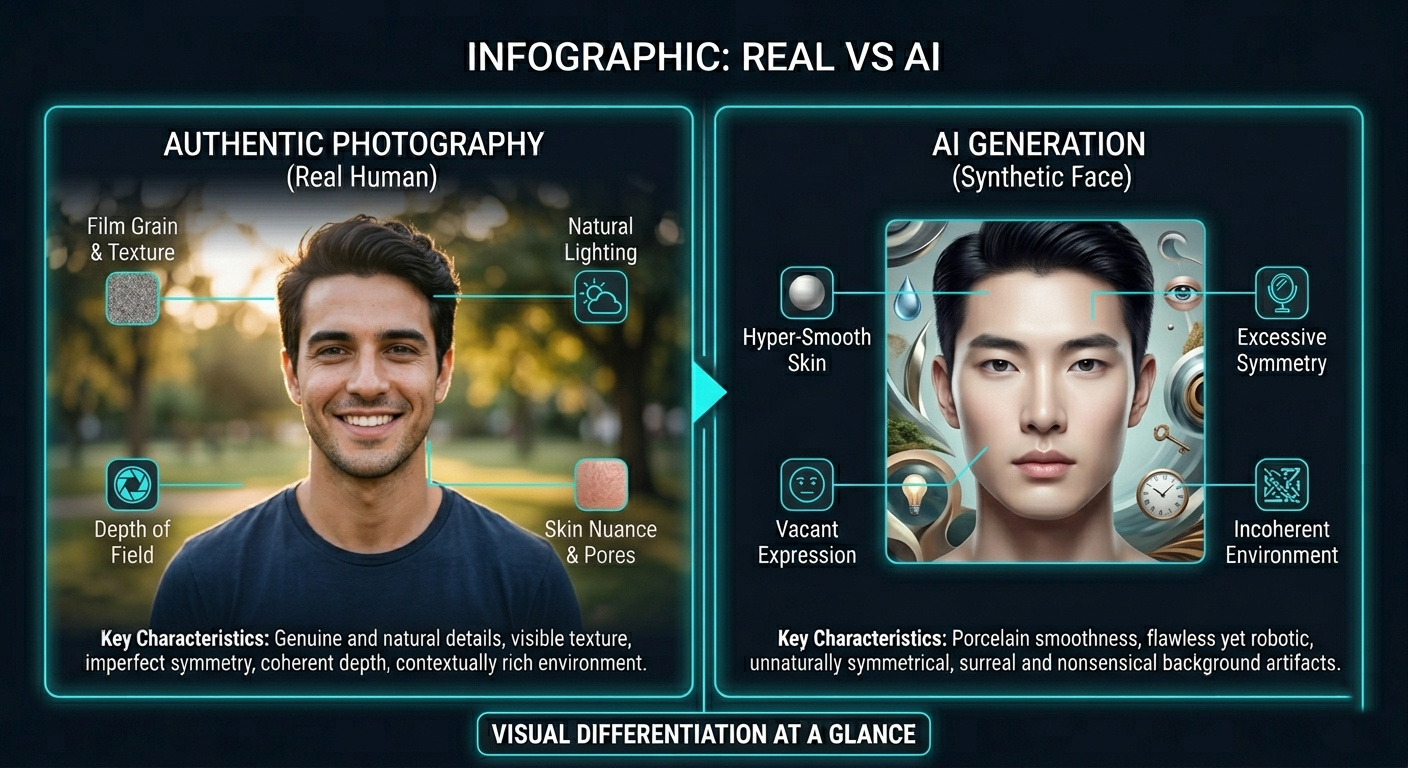

How to Tell If an Image Is AI Generated: Visual Clues

The fastest method, and one that requires no tools at all, is careful visual inspection. AI image generators are extraordinarily good at producing convincing faces, landscapes, and objects, but they consistently struggle with specific types of content. Once you know what to look for, the patterns become much easier to spot.

Hands, Fingers, and Teeth

Hands and teeth remain among the most useful visual tells in 2026. AI models have historically struggled to render human hands accurately, and while models have improved, errors still appear: extra fingers, fused knuckles, fingers that taper at unnatural angles, or thumbs on the wrong side of a hand. Similarly, teeth in AI images often appear as an undifferentiated white mass, lacking individual tooth definition, gum texture, or realistic shadows between teeth.

If an image contains a person, zoom in on the hands and mouth first. These regions require a level of structural understanding that generative models still approximate rather than master.

Backgrounds and Edges

AI generators tend to produce backgrounds that are aesthetically pleasing but physically incoherent. Look for:

- Text on signs, posters, or clothing that is garbled, misspelled, or illegible. AI models treat text as texture rather than meaning.

- Objects that blend into one another at edges: a hair strand that merges with a wall, or glasses frames that pass through ears.

- Inconsistent lighting: a subject lit from the left while shadows in the background also fall to the left, implying a light source on the opposite side.

- Repeating background patterns that tile unnaturally.

Skin, Hair, and Fabric Texture

Real photographs capture micro-details: individual pores, the way light catches individual strands of hair, the precise drape of fabric under gravity. AI generated images often render these as smooth, hyper-detailed but structurally uniform surfaces. Skin looks airbrushed. Hair looks volumetric but lacks flyaways or individual strand variation. Fabric patterns sometimes warp or fail to follow the folds of a garment coherently.

Symmetry That Is Too Perfect

Human faces are naturally asymmetrical. AI faces, especially those generated by face-specific models, tend toward an eerie symmetry. Eyes are exactly level, features are centered, and the proportional relationships between facial elements conform too precisely to idealized standards. This is sometimes described as the “uncanny valley” of AI portraiture: faces that are beautiful but somehow inhuman.
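
To make “too perfect” a little more concrete, one crude way to quantify it is the mean absolute difference between a face crop and its mirror image. The sketch below is a hypothetical, illustrative metric: the function name `asymmetry_score` and the list-of-rows grayscale format are assumptions, not part of WeDetectAI or any published detector. It also assumes the face is already cropped, upright, and centered; head tilt or off-center framing will inflate the score. A low score is at most one weak hint, never proof of AI generation.

```python
def asymmetry_score(gray):
    """Mean absolute difference between a grayscale image and its mirror image.

    Hypothetical illustration. `gray` is a list of rows of pixel intensities
    (0-255), assumed to be a face crop that is already centered and upright.
    Returns 0.0 for a perfectly mirror-symmetric crop; larger values mean
    more left-right asymmetry.
    """
    total = 0
    count = 0
    for row in gray:
        width = len(row)
        # Compare each pixel in the left half with its mirror partner on the right.
        for x in range(width // 2):
            total += abs(row[x] - row[width - 1 - x])
            count += 1
    return total / count if count else 0.0
```

Real faces typically produce a clearly nonzero score even when well aligned; the question is only whether a portrait is suspiciously close to zero compared with genuine photos taken under similar conditions.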

The Technology Behind AI Image Detection

Visual inspection is a starting point, but it has real limits, especially as AI models improve. Automated AI image detection works by training machine learning models on large datasets of both real and AI generated images, then using those models to identify statistical patterns invisible to the human eye.

Frequency Domain Analysis

One of the most powerful detection techniques is frequency domain analysis. When an image is transformed using a Fast Fourier Transform (FFT), it reveals the frequency components that make up the image. Real photographs have frequency signatures shaped by lens optics, sensor noise, and the physics of light. AI generated images have frequency signatures shaped by the mathematical operations of neural networks, and these signatures differ in measurable, detectable ways.

Published research has shown that GAN generated images leave consistent artifacts in the frequency domain, particularly at higher frequencies, that remain detectable even when the image looks perfectly realistic to human observers. Read one of the foundational papers: Detecting and Simulating Artifacts in GAN Fake Images (arXiv).
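
The idea can be illustrated with a toy measurement: what share of an image's spectral energy sits at high spatial frequencies. This sketch assumes a small grayscale image given as a list of rows and uses a naive discrete Fourier transform so it runs with the Python standard library alone; in practice you would call `numpy.fft.fft2` on a NumPy array. The function names and the 0.25 cycles-per-pixel cutoff are illustrative assumptions. Real detectors, including the approaches in the research cited above, learn far richer spectral features rather than thresholding a single ratio.

```python
import cmath
import math


def dft2(gray):
    """Naive 2-D discrete Fourier transform (O(N^4); fine for tiny demo images).

    In practice numpy.fft.fft2 computes the same thing far faster.
    """
    h, w = len(gray), len(gray[0])
    out = [[0j] * w for _ in range(h)]
    for u in range(h):
        for v in range(w):
            s = 0j
            for y in range(h):
                for x in range(w):
                    angle = -2 * math.pi * (u * y / h + v * x / w)
                    s += gray[y][x] * cmath.exp(1j * angle)
            out[u][v] = s
    return out


def high_freq_energy_ratio(gray, cutoff=0.25):
    """Share of spectral energy (excluding the DC term) at radial frequency >= cutoff.

    Frequencies are measured in cycles per pixel, so the maximum is about 0.707
    (0.5 in both directions). The default cutoff is an illustrative assumption.
    """
    h, w = len(gray), len(gray[0])
    spectrum = dft2(gray)
    high = total = 0.0
    for u in range(h):
        for v in range(w):
            if u == 0 and v == 0:
                continue  # skip the DC (average brightness) component
            # Map array indices to signed frequencies in the range [-0.5, 0.5).
            fu = (u if u < h / 2 else u - h) / h
            fv = (v if v < w / 2 else v - w) / w
            energy = abs(spectrum[u][v]) ** 2
            total += energy
            if math.hypot(fu, fv) >= cutoff:
                high += energy
    return high / total if total else 0.0
```

On an 8×8 test image, a fine checkerboard puts nearly all of its non-DC energy at the highest frequency (ratio close to 1), while a smooth horizontal gradient scores well under 0.5. Generator artifacts are far subtler than a checkerboard, which is why production systems learn what to look for instead of relying on one hand-picked number.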

EXIF Metadata Inspection

Most digital cameras and smartphones embed EXIF metadata in the photos they capture: a hidden layer of data that records the camera make and model, lens specifications, shutter speed, ISO, timestamp, and, when location services are enabled, GPS coordinates. AI generated images, by contrast, typically contain no EXIF data, or contain metadata that is generic, inconsistent, or obviously synthesized. Checking EXIF data is not foolproof: metadata can be stripped from real photos or spoofed. Still, its absence is a meaningful signal. Tools like ExifTool can surface this data in seconds.
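
As a minimal sketch of what a metadata check does under the hood, assuming a JPEG file: it walks the marker segments at the start of the file and looks for an APP1 segment whose payload begins with `Exif\0\0`. It uses only the Python standard library, and the function name `jpeg_has_exif` is hypothetical. It does not handle PNG or WEBP, which store metadata differently, and it only reports whether an EXIF block exists. For real work, ExifTool or Pillow's `Image.getexif()` will also decode the individual tags.

```python
import struct


def jpeg_has_exif(data: bytes) -> bool:
    """Return True if a JPEG byte string contains an APP1 'Exif' segment.

    Walks the marker segments that precede the compressed image data and
    assumes each of them carries a length field, as in well-formed files.
    """
    if data[:2] != b"\xff\xd8":  # every JPEG starts with the SOI marker
        raise ValueError("not a JPEG file")
    pos = 2
    while pos + 4 <= len(data):
        if data[pos] != 0xFF:
            return False  # malformed stream; give up
        marker = data[pos + 1]
        if marker == 0xFF:  # fill byte before a marker; skip it
            pos += 1
            continue
        if marker in (0xD9, 0xDA):  # end of image or start of scan: no more metadata
            return False
        # Segment length is big-endian and includes the two length bytes themselves.
        (length,) = struct.unpack(">H", data[pos + 2:pos + 4])
        payload = data[pos + 4:pos + 2 + length]
        if marker == 0xE1 and payload.startswith(b"Exif\x00\x00"):
            return True
        pos += 2 + length
    return False
```

To use it, read a file in binary mode and pass the bytes, for example `jpeg_has_exif(f.read())`. A `False` result on an image downloaded from social media says very little, since most platforms strip EXIF on upload.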

Convolutional Neural Network (CNN) Classifiers

The most accurate detection tools, including WeDetectAI, use fine-tuned convolutional neural networks trained specifically to distinguish AI generated images from real photographs. These models analyze images at the pixel level, identifying micro-patterns in noise distribution, edge coherence, and color channel relationships that betray AI synthesis.
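
Many published forensic pipelines feed their classifier a noise residual rather than raw pixels, because subtracting a local prediction of each pixel strips away most scene content and leaves the fine-grained noise where synthesis artifacts tend to show up. The following is a minimal sketch of that preprocessing step only, assuming a grayscale image as a list of rows and a simple 4-neighbour high-pass filter chosen for illustration. It is not a classifier, the function names are hypothetical, and WeDetectAI has not published which filters or architecture it uses.

```python
def noise_residual(gray):
    """High-pass residual: each interior pixel minus the mean of its 4 neighbours.

    Smooth content (flat areas, linear gradients) cancels to zero, leaving
    only fine-grained variation. Border pixels are skipped for simplicity.
    """
    h, w = len(gray), len(gray[0])
    residual = []
    for y in range(1, h - 1):
        row = []
        for x in range(1, w - 1):
            neighbours = gray[y - 1][x] + gray[y + 1][x] + gray[y][x - 1] + gray[y][x + 1]
            row.append(gray[y][x] - neighbours / 4)
        residual.append(row)
    return residual


def residual_energy(gray):
    """Mean squared residual: one crude summary a downstream model might see."""
    values = [v for row in noise_residual(gray) for v in row]
    return sum(v * v for v in values) / len(values)
```

A trained CNN would take residual maps like these (usually from several learned or fixed filters) as input and learn which noise statistics separate camera sensors from generators, rather than relying on a single summary number.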

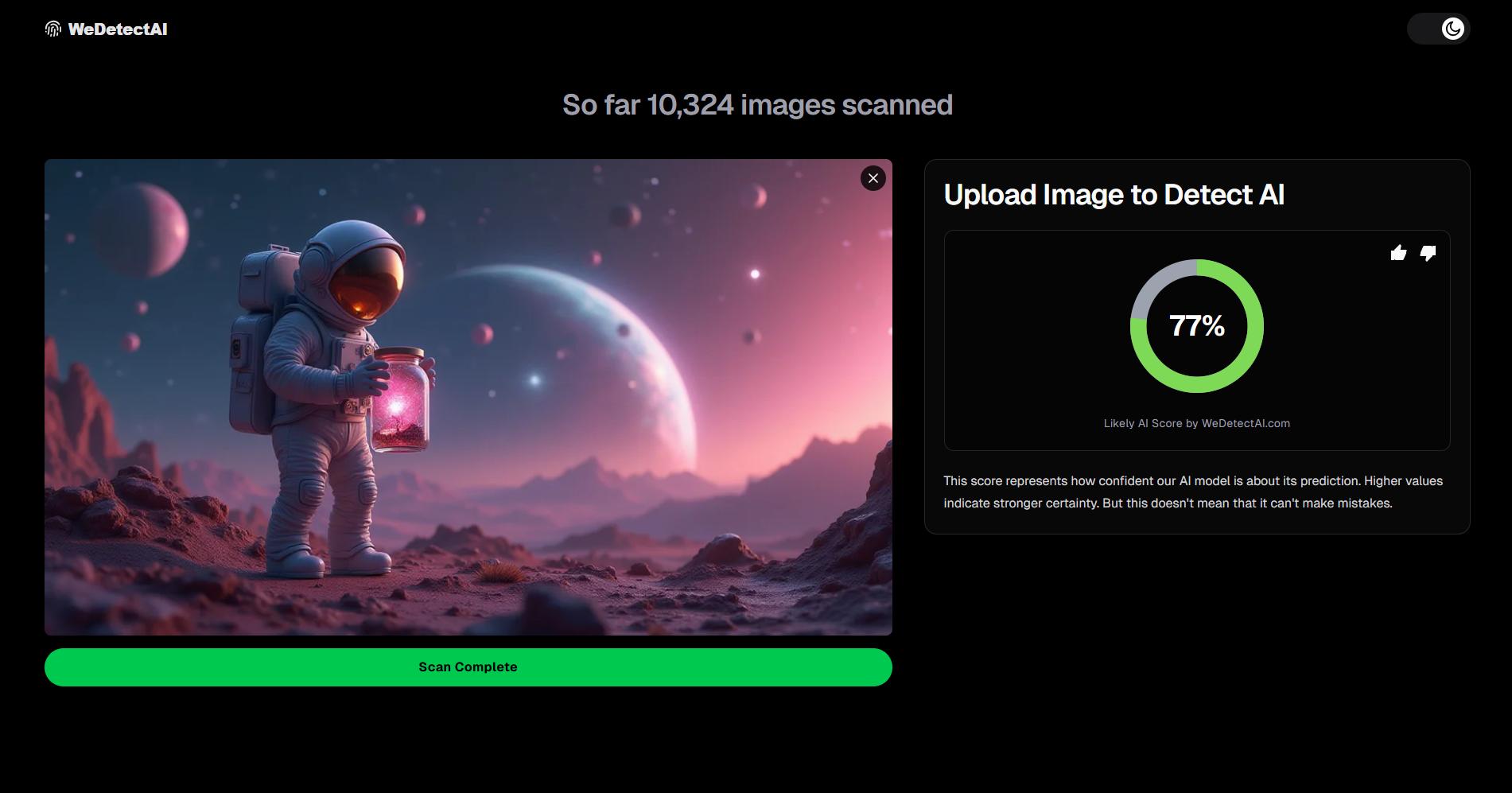

WeDetectAI's detection model returns a confidence score from 0 to 100%, indicating the probability that a given image is AI generated, giving users a quantified, actionable result rather than a binary guess.

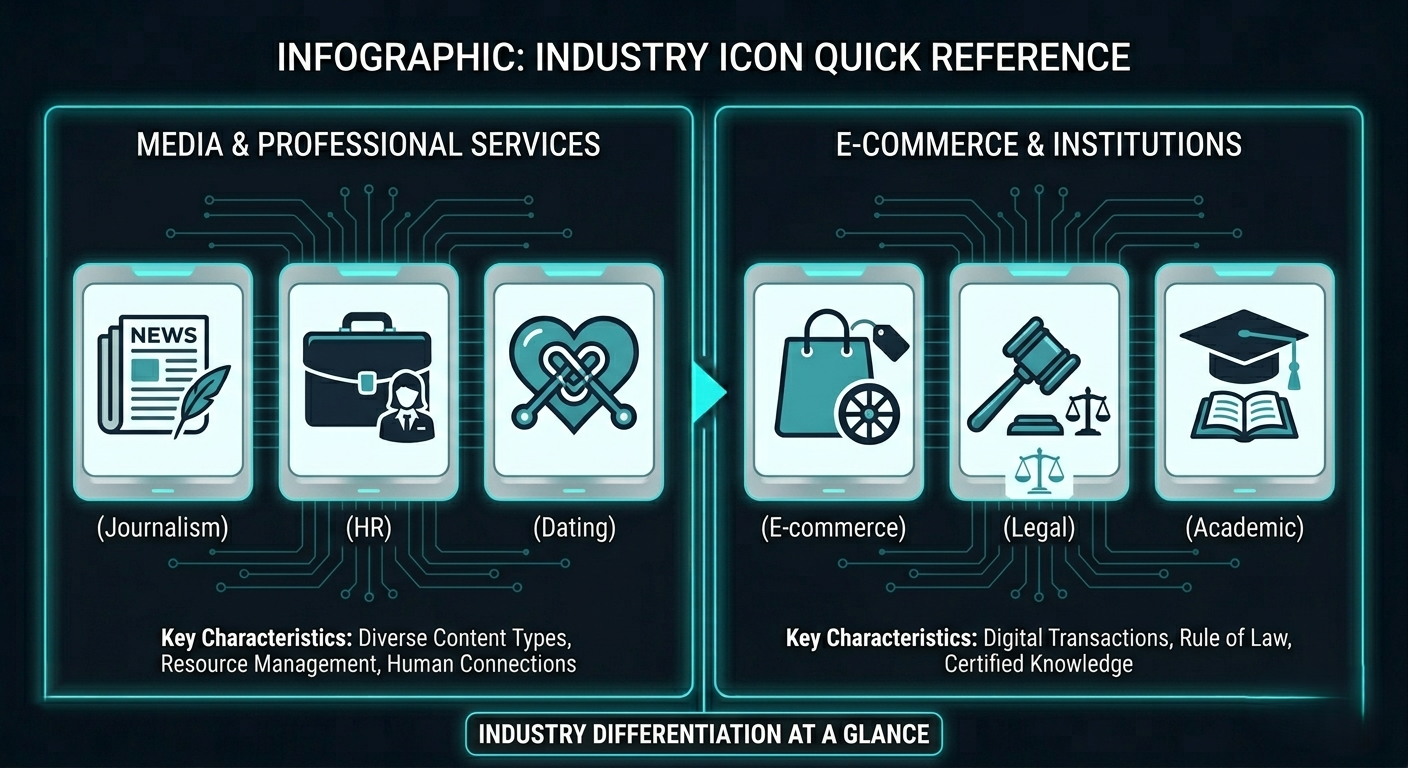

Why It Matters: Real World Use Cases for AI Image Detection

Detecting AI generated images is not an abstract technical exercise. It has direct, high stakes applications across a wide range of industries and everyday situations.

- Journalism and fact-checking: News organizations now face routine submission of AI generated evidence images claiming to document real events. The Reuters Institute's 2025 Digital News Report flagged synthetic image misinformation as one of the top three trust challenges facing newsrooms globally.

- HR and hiring: Recruiters on LinkedIn increasingly encounter AI generated profile photos: smooth, professional headshots that belong to no real person. Verifying whether a candidate's photo is real is now a standard due diligence step at many firms.

- Dating apps and social platforms: Romance scammers have adopted AI generated profile pictures at scale. A 2024 Federal Trade Commission report found that romance scam losses in the US exceeded $1.1 billion, with fake profile images being a core element of most schemes.

- E-commerce and product listings: AI generated product photography, showing items that look different from what ships, is a growing consumer protection issue on major marketplaces.

- Legal and insurance contexts: AI generated images are increasingly submitted as fabricated evidence in insurance claims and legal disputes, requiring authenticated forensic analysis.

- Academic integrity: Educators and publishers now check submitted images for AI generation, particularly in scientific and photographic contexts where authentic documentation is essential.

Try It Free

Upload any image and get an AI detection confidence score in seconds. No account required.

Detect AI Images Free at WeDetectAI →

Real vs AI Image: How Detection Tools Compare

Not all AI image detectors are built equally. Here's how the main approaches and tools stack up across the criteria that matter most:

| Feature | Manual Visual Inspection | Metadata / EXIF Tools | WeDetectAI |
|---|---|---|---|
| Speed | Slow (minutes per image) | Fast (seconds) | Instant |
| Accuracy | Low to medium (human bias) | Low (easily spoofed) | High (90%+ on tested datasets) |
| Keeps up with newer AI models | Poor (models evolve) | Partial | Yes (model updated continuously) |
| Requires technical knowledge | Some | Yes | None |
| Free to use | Yes | Yes (third-party tools) | Yes |
| Confidence score provided | No | No | Yes (0 to 100%) |
| Batch image support | No | Limited | Yes |

The table above makes clear that while visual inspection and metadata tools provide useful supplementary signals, a purpose-built AI generated image detector like WeDetectAI offers a significantly more reliable and accessible result, especially for non-technical users who need a fast, trustworthy answer.

Common Myths About AI Image Detection

Misconceptions about AI generated images and how to detect them are widespread. Here are the most damaging ones and the truth behind each.

“If it looks real, it is real.”

This is the most dangerous assumption anyone can make in 2026. State-of-the-art diffusion models produce images that are photorealistic enough to fool trained journalists and forensic analysts at first glance. Realism is no longer a reliable marker of authenticity. Systematic inspection, including running the image through a detector, is the only responsible standard.

“AI images always have weird hands or glitchy backgrounds.”

This was reliably true in 2022. It is increasingly false in 2026. Midjourney V6 and DALL·E 3 have dramatically improved hand rendering. Relying solely on obvious visual artifacts means missing a growing percentage of sophisticated synthetic images that contain no obvious flaws at all.

“Metadata always reveals AI images.”

Metadata inspection is useful but not conclusive. Real images are routinely stripped of EXIF data when uploaded to social platforms (Instagram, Twitter/X, and WhatsApp all remove metadata by default). Conversely, EXIF data can be injected into AI generated images using freely available software. Absence of metadata is a signal, not proof.

“AI image detectors are always right.”

No detector, including WeDetectAI, achieves 100% accuracy across all image types and all AI models. Detection confidence scores should be interpreted as probabilistic assessments, not verdicts. The best practice is to combine a detection tool's confidence score with visual inspection and contextual judgment, particularly for high stakes decisions.

“Only bad actors create AI generated images.”

AI image generation has many entirely legitimate uses: creative work, product visualization, advertising, concept art, and more. The problem is not AI images themselves but undisclosed or deceptive use of them in contexts where authenticity is implied or expected.

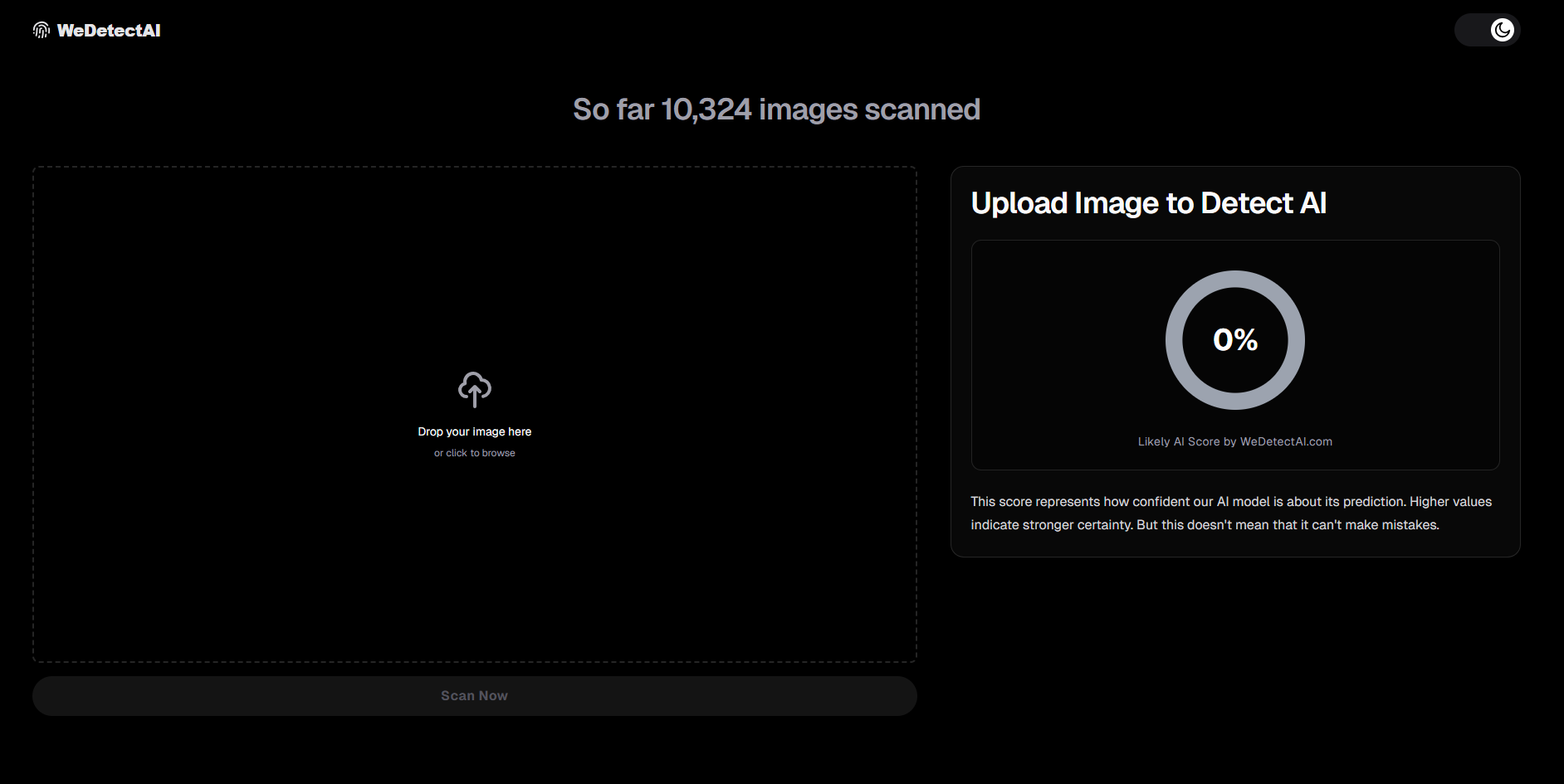

How to Detect AI Generated Images Using WeDetectAI

The fastest and most reliable way to check whether an image is AI generated is to run it through a purpose-built detection tool. Here's how to do it with WeDetectAI in under a minute.

Go to WeDetectAI.com

Open your browser and navigate to wedetectai.com. No account creation, no subscription, no personal information required. The detector is available immediately on the homepage.

Upload or Paste the Image

You have three input options:

- Drag and drop the image file directly into the upload zone.

- Click to browse and select a file from your device (supports JPG, PNG, WEBP).

- Paste an image URL if the image is hosted online, and WeDetectAI will fetch and analyze it directly.

For best results, use the highest-resolution version of the image available. Heavily compressed or resized images can reduce detection accuracy.

Let WeDetectAI Analyze the Image

WeDetectAI's detection engine processes the image through its neural network pipeline. Analysis typically completes in under five seconds. During this time, the model examines pixel-level noise patterns, frequency domain signatures, edge coherence, and color channel statistics, all in the background, invisibly to the user.

Read Your Confidence Score

The result screen displays a confidence score from 0 to 100%, indicating the probability that the image is AI generated.

Apply Context and Decide

Use the confidence score alongside your own visual inspection and the context in which you found the image. If the score is high and the image is being used to make a factual claim, verify through additional sources before sharing or acting on it.
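
As a minimal sketch of how the score and your own inspection might be combined, here is a hypothetical triage rule. The function name, the count of visual red flags, and the 20/50/80 thresholds are all illustrative assumptions; WeDetectAI does not prescribe these cut-offs, and any such rule should be tuned to how costly a wrong call is in your context.

```python
def triage(score: float, visual_red_flags: int, used_as_evidence: bool) -> str:
    """Hypothetical rule combining a detector's AI-probability score (0-100)
    with the number of visual red flags found during manual inspection.

    Thresholds are illustrative assumptions, not WeDetectAI guidance.
    """
    if not 0 <= score <= 100:
        raise ValueError("score must be between 0 and 100")
    if score >= 80 or (score >= 50 and visual_red_flags >= 2):
        verdict = "likely AI generated"
    elif score <= 20 and visual_red_flags == 0:
        verdict = "no strong AI signal"
    else:
        verdict = "inconclusive"
    # A non-low result on an image used to support a factual claim
    # calls for independent verification before acting on it.
    if used_as_evidence and verdict != "no strong AI signal":
        verdict += "; verify with additional sources before sharing"
    return verdict
```

The point of encoding the rule is consistency: the same score and the same observations lead to the same action, and the middle band is explicitly labeled inconclusive rather than forced into a yes or no.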

Further reading: Understanding WeDetectAI confidence scores

Frequently Asked Questions

How accurate are AI image detectors in 2026?

The best AI image detectors in 2026 achieve accuracy rates above 90% on benchmark datasets of known AI generated and real images. However, accuracy varies significantly depending on the AI model used to generate the image, any post-processing applied, and image resolution. No detector is infallible; results should always be considered alongside other context. WeDetectAI continuously updates its detection model to keep pace with the latest generation tools.
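
A short worked example shows why even a 90%-accurate detector's output should be read as a probability. Suppose, purely hypothetically, that 5% of the images you check are AI generated, and that “90% accuracy” means the detector flags 90% of AI images (sensitivity) and correctly clears 90% of real ones (specificity). The share of flagged images that really are AI generated, the positive predictive value, follows from basic arithmetic; the function name below is illustrative.

```python
def positive_predictive_value(prevalence, sensitivity, specificity):
    """Fraction of 'AI generated' flags that are correct.

    prevalence:  share of checked images that really are AI generated
    sensitivity: share of AI images the detector flags (true positive rate)
    specificity: share of real images it correctly clears (true negative rate)
    """
    true_pos = prevalence * sensitivity
    false_pos = (1 - prevalence) * (1 - specificity)
    return true_pos / (true_pos + false_pos)
```

With those assumed numbers, `positive_predictive_value(0.05, 0.9, 0.9)` is 0.045 / 0.14, about 0.32: roughly two out of three flags would be false alarms, even though the detector is right on 90% of individual images. Applied to a stream that is half AI generated, the same detector gives 0.9. How often AI images actually appear in your context matters as much as the detector's headline accuracy.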

Can AI image detection tools be fooled?

Yes. Adversarial techniques, including specific types of noise injection and post-processing filters, can reduce the effectiveness of detection models. This is an active area of research on both sides. The practical takeaway is that detection should be treated as a probabilistic tool, not court-admissible proof. That said, casual manipulation by non-technical users rarely defeats well-trained detection models.

Do social media platforms strip metadata from images?

Yes. Instagram, Facebook, Twitter/X, WhatsApp, and most major platforms automatically strip EXIF metadata from images upon upload as part of their image optimization pipeline. This means a real photograph shared via social media will appear to have no camera metadata, which is why metadata absence alone cannot be used to identify AI generated images shared on these platforms.

Is there a free AI image detector I can use right now?

Yes. WeDetectAI at wedetectai.com is free to use with no account required. Upload any image and receive a confidence score in seconds. It supports JPG, PNG, and WEBP formats, as well as direct URL analysis for images hosted online.

What's the best way to tell if a face is AI generated?

Start with visual inspection of the hands, teeth, ears, and background. Then look for symmetry that seems unnatural, skin texture that appears airbrushed, and any garbled text near the subject. For a more reliable result, run the image through an AI image detector like WeDetectAI and check the confidence score alongside your visual assessment.

Conclusion

Asking “is this image AI generated?” is one of the most important questions you can ask in 2026, whether you're checking a news photo, screening a job candidate, or vetting a dating profile. The tools to answer that question exist, they're increasingly accurate, and they're free.

Visual inspection gives you a useful first pass. Metadata inspection adds a supplementary signal. But for a fast, reliable, and quantified answer, running the image through a purpose-built detection engine is the gold standard. WeDetectAI was built precisely for this moment: to give anyone, regardless of technical background, a fast and trustworthy answer.

Free · No Signup Required · Instant Results

Not sure if that image is real?

Upload any image and get your confidence score in under five seconds. Try WeDetectAI free, no account required.